The Anthropic API works great, but it isn't cheap. And what if you're in a region with limited access, or you just want to see how Claude Code behaves with a different brain underneath? I started testing alternative providers. Here's what actually works.

How it works

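Claude Code respects the ANTHROPIC_BASE_URL environment variable. Point it at an endpoint that speaks Anthropic's Messages API format and Claude Code routes all calls there; plain OpenAI-compatible endpoints need a translating proxy such as LiteLLM in between. ANTHROPIC_AUTH_TOKEN (or ANTHROPIC_API_KEY) carries whichever key the new provider issued you. The model mapping variables (ANTHROPIC_DEFAULT_OPUS_MODEL, ANTHROPIC_DEFAULT_SONNET_MODEL, ANTHROPIC_DEFAULT_HAIKU_MODEL) tell Claude Code which model name to actually send in requests.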
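To make the routing concrete, here is a minimal bash sketch of how these variables resolve into a request target and a model name. It assumes Claude Code follows the Anthropic SDK convention of appending /v1/messages to ANTHROPIC_BASE_URL; the helper names are hypothetical, and the placeholder text stands in for whatever built-in defaults Claude Code uses when no mapping is set.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: show where Claude Code's requests would be sent and
# which model name would go on the wire, based on the current environment.

# Assumption: the client appends /v1/messages to the base URL (Anthropic SDK
# convention). A trailing slash on the base URL is stripped first.
messages_url() {
  local base="${ANTHROPIC_BASE_URL:-https://api.anthropic.com}"
  printf '%s/v1/messages\n' "${base%/}"
}

# Map a model tier (opus | sonnet | haiku) to the name sent in the request body.
# With no override set, print a placeholder for Claude Code's built-in default.
mapped_model() {
  local var
  case "$1" in
    opus)   var=ANTHROPIC_DEFAULT_OPUS_MODEL ;;
    sonnet) var=ANTHROPIC_DEFAULT_SONNET_MODEL ;;
    haiku)  var=ANTHROPIC_DEFAULT_HAIKU_MODEL ;;
    *) echo "unknown tier: $1" >&2; return 1 ;;
  esac
  # ${!var:-...} reads the variable whose name is stored in $var.
  printf '%s\n' "${!var:-<built-in $1 default>}"
}
```

For example, with the Z.AI values from the next section exported, messages_url prints https://api.z.ai/api/anthropic/v1/messages and mapped_model sonnet prints GLM-5.1.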

The catch: Claude Code relies heavily on tool use for file reads, shell commands, and git operations. If the provider doesn't implement Anthropic's tool use protocol faithfully (tool definitions in the request, tool_use and tool_result content blocks in the conversation), Claude Code degrades to a fancy chatbot. Still useful, just not agentic.
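A quick way to tell whether a provider passes tool use through is to send one Messages API request that offers a tool and check whether the reply contains a tool_use content block. The sketch below assumes the provider accepts Anthropic's request format at $ANTHROPIC_BASE_URL/v1/messages with Bearer auth; the get_time tool and both function names are hypothetical. A model may legitimately answer in plain text instead, so treat a negative result as a hint rather than proof.

```bash
#!/usr/bin/env bash
# Succeeds if a Messages API response (read from stdin) contains a content
# block of type "tool_use". Plain grep keeps this dependency-free; a real
# JSON parser such as jq would be more robust.
has_tool_use() {
  grep -Eq '"type"[[:space:]]*:[[:space:]]*"tool_use"'
}

# Sends one request that offers a single hypothetical tool (get_time) and
# reports whether the reply came back as a tool call.
# Usage: probe_tool_use <model-name>
probe_tool_use() {
  local model="${1:-}"
  if [ -z "$model" ] || [ -z "${ANTHROPIC_BASE_URL:-}" ] || [ -z "${ANTHROPIC_AUTH_TOKEN:-}" ]; then
    echo "usage: probe_tool_use <model> (needs ANTHROPIC_BASE_URL and ANTHROPIC_AUTH_TOKEN)" >&2
    return 2
  fi
  local body response
  body=$(cat <<EOF
{
  "model": "$model",
  "max_tokens": 256,
  "tools": [{
    "name": "get_time",
    "description": "Return the current time.",
    "input_schema": {"type": "object", "properties": {}}
  }],
  "messages": [{"role": "user", "content": "What time is it? Use the get_time tool."}]
}
EOF
)
  # Assumes the provider mirrors Anthropic's /v1/messages path and headers.
  response=$(curl -sS "${ANTHROPIC_BASE_URL%/}/v1/messages" \
    -H "Authorization: Bearer $ANTHROPIC_AUTH_TOKEN" \
    -H "anthropic-version: 2023-06-01" \
    -H "content-type: application/json" \
    -d "$body") || return 1
  printf '%s\n' "$response"
  if printf '%s' "$response" | has_tool_use; then
    echo "tool_use block returned: tool calling looks supported"
  else
    echo "no tool_use block: expect Claude Code to behave like a chatbot" >&2
    return 1
  fi
}
```

After exporting a provider's variables, something like probe_tool_use GLM-5.1 gives a rough signal before you spend time in a full Claude Code session.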

Configuration

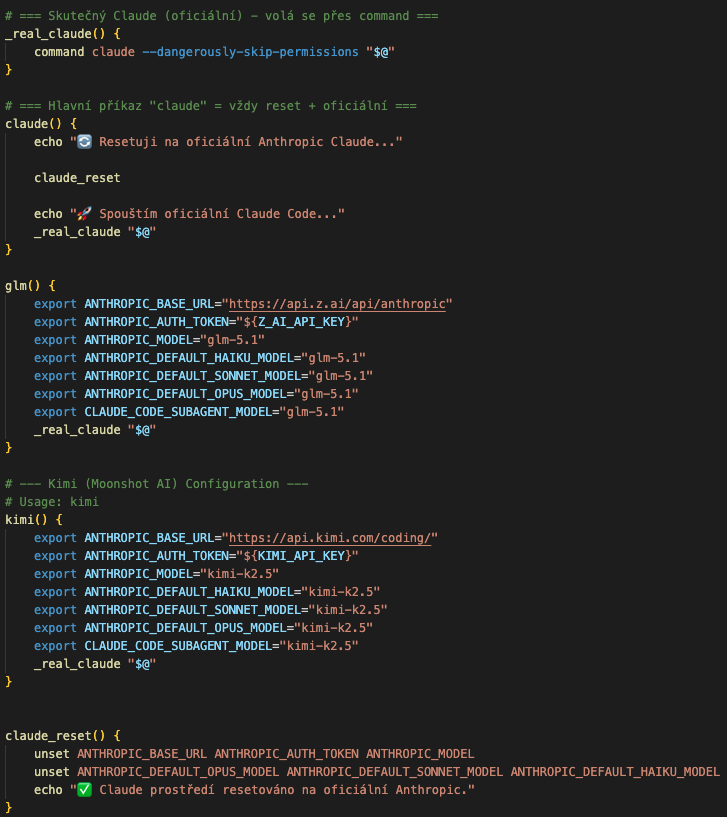

The screenshot shows the exact setup. The variables can go in the env block of ~/.claude/settings.json or be exported in your shell before launching claude.
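If you try a provider with exported variables first and later want it in ~/.claude/settings.json, a small helper can print the matching env block. This is a minimal bash sketch; the function name is hypothetical, it performs no JSON escaping (values containing quotes or backslashes are skipped), and it only prints to stdout, so merging the output into an existing settings file that may hold other keys is left to you.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the provider variables exported in this shell as
# an "env" block for ~/.claude/settings.json. It writes to stdout only.
claude_env_json() {
  local vars=(ANTHROPIC_BASE_URL ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY
              ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL
              ANTHROPIC_DEFAULT_HAIKU_MODEL API_TIMEOUT_MS)
  local out="" name val
  for name in "${vars[@]}"; do
    # ${!name+set} is non-empty when the variable exists, even if it is empty,
    # so ANTHROPIC_API_KEY="" is kept while unset variables are skipped.
    [ -n "${!name+set}" ] || continue
    val="${!name}"
    # No JSON escaping is done; refuse values that would need it.
    if [[ "$val" == *'"'* || "$val" == *'\'* ]]; then
      echo "claude_env_json: skipping $name (contains a quote or backslash)" >&2
      continue
    fi
    if [ -n "$out" ]; then out+=$',\n'; fi
    out+="    \"$name\": \"$val\""
  done
  printf '{\n  "env": {\n%s\n  }\n}\n' "$out"
}
```

With only ANTHROPIC_BASE_URL and an empty ANTHROPIC_API_KEY set, the output is an env block containing just those two keys, with the empty key preserved.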

GLM-5.1 via Z.AI

Z.AI (Zhipu AI, 智谱 AI) provides an Anthropic-compatible endpoint. Register at z.ai, grab your API key, then set these exact variables (from the Z.AI docs at docs.z.ai/devpack/tool/claude):

```bash
export ANTHROPIC_AUTH_TOKEN="<your-z.ai-api-key>"
export ANTHROPIC_BASE_URL=https://api.z.ai/api/anthropic
export ANTHROPIC_DEFAULT_OPUS_MODEL="GLM-5.1"
export ANTHROPIC_DEFAULT_SONNET_MODEL="GLM-5.1"
export ANTHROPIC_DEFAULT_HAIKU_MODEL="GLM-4.5-Air"
export API_TIMEOUT_MS=3000000
```

Or run their one-liner setup script, which writes these values into ~/.claude/settings.json automatically:

```bash
curl -O "https://cdn.bigmodel.cn/install/claude_code_zai_env.sh" && bash ./claude_code_zai_env.sh
```

Verify everything landed correctly with /status inside Claude Code.
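If you switch providers often, wrapping the exports in shell functions saves retyping. This is a minimal bash sketch mirroring the Z.AI values above; the function names are hypothetical, and ZAI_API_KEY is an assumed variable name for wherever you keep your Z.AI key.

```bash
#!/usr/bin/env bash
# Hypothetical shell helpers for switching Claude Code between providers.

# Clear every provider-related variable so Claude Code falls back to its defaults.
# Note: this also unsets any ANTHROPIC_API_KEY you had exported.
claude_provider_reset() {
  unset ANTHROPIC_BASE_URL ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY \
        ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL \
        ANTHROPIC_DEFAULT_HAIKU_MODEL API_TIMEOUT_MS
}

# Point Claude Code at Z.AI's Anthropic-compatible endpoint.
# Usage: use_zai [api-key]   (defaults to $ZAI_API_KEY, an assumed name)
use_zai() {
  local key="${1:-${ZAI_API_KEY:-}}"
  if [ -z "$key" ]; then
    echo "use_zai: pass a key or set ZAI_API_KEY" >&2
    return 1
  fi
  claude_provider_reset
  export ANTHROPIC_AUTH_TOKEN="$key"
  export ANTHROPIC_BASE_URL="https://api.z.ai/api/anthropic"
  export ANTHROPIC_DEFAULT_OPUS_MODEL="GLM-5.1"
  export ANTHROPIC_DEFAULT_SONNET_MODEL="GLM-5.1"
  export ANTHROPIC_DEFAULT_HAIKU_MODEL="GLM-4.5-Air"
  export API_TIMEOUT_MS=3000000   # 3,000,000 ms = 50 minutes
}
```

Source these from your shell profile, run use_zai, then launch claude from that same shell; claude_provider_reset takes the shell back to native Anthropic.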

Result: basic conversation and code reading worked well. Tool use was functional. The context window is generous and GLM-5.1 is noticeably more capable than earlier versions for multi-step reasoning tasks.

OpenRouter + Qwen 3.6

OpenRouter acts as a unified gateway to hundreds of models. The configuration is slightly different — you need to explicitly blank out ANTHROPIC_API_KEY, otherwise Claude Code tries to use it instead of the OpenRouter key. From the OpenRouter docs (openrouter.ai/docs/guides/coding-agents/claude-code-integration):

```bash
export OPENROUTER_API_KEY="<your-openrouter-api-key>"
export ANTHROPIC_BASE_URL="https://openrouter.ai/api"
export ANTHROPIC_AUTH_TOKEN="$OPENROUTER_API_KEY"
export ANTHROPIC_API_KEY="" # Must be explicitly empty
export ANTHROPIC_DEFAULT_OPUS_MODEL="qwen/qwen3-6b"
export ANTHROPIC_DEFAULT_SONNET_MODEL="qwen/qwen3-6b"
export ANTHROPIC_DEFAULT_HAIKU_MODEL="qwen/qwen3-6b"
```

Alternatively, create .claude/settings.local.json in your project. This keeps provider config per-project without polluting your shell:

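```json
{
  "env": {
    "ANTHROPIC_BASE_URL": "https://openrouter.ai/api",
    "ANTHROPIC_AUTH_TOKEN": "<your-openrouter-api-key>",
    "ANTHROPIC_API_KEY": "",
    "ANTHROPIC_DEFAULT_OPUS_MODEL": "qwen/qwen3-6b",
    "ANTHROPIC_DEFAULT_SONNET_MODEL": "qwen/qwen3-6b",
    "ANTHROPIC_DEFAULT_HAIKU_MODEL": "qwen/qwen3-6b"
  }
}
```

Important: don't use a regular .env file for these. Claude Code doesn't read standard .env files; use settings.local.json or export directly in your shell.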
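The same export-in-a-function approach works for OpenRouter. This minimal bash sketch mirrors the OpenRouter exports above; the function name is hypothetical, and the model slug is passed in rather than hard-coded because OpenRouter model IDs change, so check openrouter.ai/models for the current one.

```bash
#!/usr/bin/env bash
# Hypothetical helper mirroring the OpenRouter settings above.
# Usage: use_openrouter <model-slug>   (reads the key from $OPENROUTER_API_KEY)
use_openrouter() {
  local model="${1:-}"
  if [ -z "$model" ]; then
    echo "usage: use_openrouter <model-slug>" >&2
    return 1
  fi
  if [ -z "${OPENROUTER_API_KEY:-}" ]; then
    echo "use_openrouter: set OPENROUTER_API_KEY first" >&2
    return 1
  fi
  export ANTHROPIC_BASE_URL="https://openrouter.ai/api"
  export ANTHROPIC_AUTH_TOKEN="$OPENROUTER_API_KEY"
  # Set but empty, so a real Anthropic key in the shell isn't used instead.
  export ANTHROPIC_API_KEY=""
  # One slug for all three tiers, as in the exports above.
  export ANTHROPIC_DEFAULT_OPUS_MODEL="$model"
  export ANTHROPIC_DEFAULT_SONNET_MODEL="$model"
  export ANTHROPIC_DEFAULT_HAIKU_MODEL="$model"
}
```

For the setup in this article that would be use_openrouter qwen/qwen3-6b, followed by launching claude from the same shell.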

Result: Qwen 3.6 through OpenRouter was capable. Tool use worked via OpenRouter's protocol translation, and latency was reasonable. Cost was significantly lower, since I was on the free preview.


Kimi (Moonshot AI)

Moonshot AI's Kimi offers up to 128k tokens of context through an OpenAI-compatible endpoint at api.moonshot.cn/v1. Basic conversation worked, but tool use was unreliable. The long context is genuinely useful for large codebases; without stable tool use, though, its value inside Claude Code is limited.

Observations

What works regardless of provider: conversation, and reading and explaining code that's already in the context. What depends on the tool calling implementation: reading and writing files, running shell commands, and git operations. Those are the core of what makes Claude Code an agent rather than just a chat interface.

If a provider doesn't implement Anthropic's tool use format faithfully, you lose agentic capabilities. Z.AI and OpenRouter (with the right models) handle this best. Raw OpenAI-compatible endpoints without tool protocol support give you a smart assistant, not an autonomous coding agent.

Bottom line

Switching providers makes sense in two cases: you're testing how different models approach your specific codebase, or you need a cost-effective alternative for lighter tasks. For full agentic operation with tool use, Z.AI (GLM-5.1) and OpenRouter are the most reliable alternatives to native Anthropic right now. Using OpenAI models through a LiteLLM proxy, which translates between the Anthropic and OpenAI request formats, comes close but requires running extra infrastructure.

Run /status after setup to confirm Claude Code picked up the right model — saves debugging time when something silently falls back to defaults.
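/status is the authoritative check, but a few silent-fallback cases can be caught before launching. This is a minimal bash sketch of a pre-flight check for an alternative-provider setup; the function name is hypothetical, and the warnings encode this article's observations (for example, that a non-empty ANTHROPIC_API_KEY can take precedence over ANTHROPIC_AUTH_TOKEN) rather than an exhaustive list of Claude Code's rules.

```bash
#!/usr/bin/env bash
# Hypothetical pre-launch check for an alternative-provider setup.
# Prints one warning per problem and returns the number of warnings (0 = clean).
claude_preflight() {
  local warnings=0 tier var
  if [ -z "${ANTHROPIC_BASE_URL:-}" ]; then
    echo "warn: ANTHROPIC_BASE_URL unset; requests go to Anthropic"
    warnings=$((warnings + 1))
  fi
  # Per the OpenRouter notes above: a non-empty ANTHROPIC_API_KEY can win over the token.
  if [ -n "${ANTHROPIC_AUTH_TOKEN:-}" ] && [ -n "${ANTHROPIC_API_KEY:-}" ]; then
    echo "warn: both ANTHROPIC_AUTH_TOKEN and ANTHROPIC_API_KEY are non-empty"
    warnings=$((warnings + 1))
  fi
  for tier in OPUS SONNET HAIKU; do
    var="ANTHROPIC_DEFAULT_${tier}_MODEL"
    if [ -z "${!var:-}" ]; then
      echo "warn: $var unset; the provider gets a default Claude model ID it may reject or remap"
      warnings=$((warnings + 1))
    fi
  done
  return "$warnings"
}
```

Run it in the shell you'll launch claude from; a zero exit status means none of these cases apply, and /status inside Claude Code confirms the rest.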